
The method statistics report looks like this:

We can also see how many times a particular method was called. Like JMeter, but at the method instead of the API level, we can see average, maximum, and minimum runtimes. The results are summed up for the whole call tree. “Profiled mode” works by adding instrumentation to selected classes, recording method invocations, how long they took, and what other methods were called. There are major advantages and drawbacks to both, and understanding the differences is a key element to gathering usable data. JProfiler has two major modes of analyzing CPU time: profiled and sampled. It is primarily used to look for algorithmic issues. It should be readily apparent that profiled data cannot be used to establish or validate SLAs.

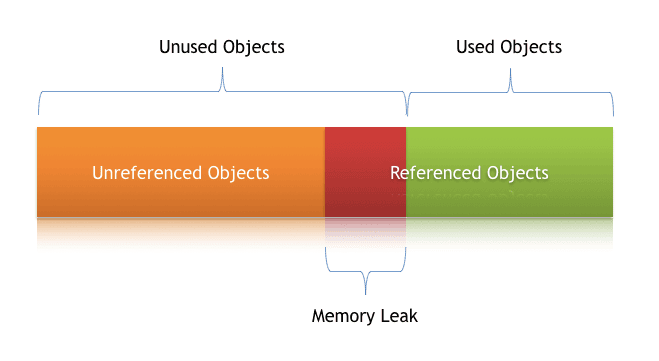

Due to hardware constraints and our testing goals, 90 percent of my tests were single-threaded sequential requests, often made against the same datasets. We run our tests against a development environment, which means the performance of the services we depend on are unpredictable and certainly not reliable simulations of their production counterparts. That depends on how it’s used exactly, but more on that later. Therefore, it negatively impacts performance. We do not use JProfiler in our production environment because it does its magic by modifying the JVM and taking measurements from the runtime environment. It can also be used to identify memory issues, but we didn’t use it for that purpose in this particular exercise. JVM profiling by and large, aims to identify long-running or redundant method invocations.

I just completed a long analysis of our V2 API using JProfiler for API performance testing and want to spend some time - ok, the lion’s share of this post - discussing what we learned from this analysis.
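To make the profiled-mode bookkeeping described above a little more concrete, here is a minimal sketch of per-method statistics: invocation count plus average, minimum, and maximum runtime. It is not JProfiler's API or implementation. The `MethodStats` class, its `record` and `time` methods, and the method names passed to them are all hypothetical and exist only for illustration.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Supplier;

// Hypothetical illustration only: NOT JProfiler's API or internal design.
public class MethodStats {

    // Aggregate for one method: call count plus total, min, and max elapsed time.
    public static final class Stat {
        private long count;
        private long totalNanos;
        private long minNanos = Long.MAX_VALUE;
        private long maxNanos = Long.MIN_VALUE;

        private void add(long nanos) {
            count++;
            totalNanos += nanos;
            minNanos = Math.min(minNanos, nanos);
            maxNanos = Math.max(maxNanos, nanos);
        }

        public long count() { return count; }
        public long minNanos() { return count == 0 ? 0 : minNanos; }
        public long maxNanos() { return count == 0 ? 0 : maxNanos; }
        public double averageNanos() {
            return count == 0 ? 0.0 : (double) totalNanos / count;
        }
    }

    private final Map<String, Stat> stats = new LinkedHashMap<>();

    // Record one invocation of the named method with a known duration.
    public void record(String method, long elapsedNanos) {
        stats.computeIfAbsent(method, k -> new Stat()).add(elapsedNanos);
    }

    // Hand-written stand-in for instrumentation: time the body, then record it.
    public <T> T time(String method, Supplier<T> body) {
        long start = System.nanoTime();
        try {
            return body.get();
        } finally {
            record(method, System.nanoTime() - start);
        }
    }

    public Stat get(String method) {
        return stats.get(method);
    }

    public static void main(String[] args) {
        MethodStats profiler = new MethodStats();
        // "loadWidget" is a made-up method name for the example.
        for (int i = 0; i < 3; i++) {
            profiler.time("loadWidget", () -> Math.sqrt(42.0));
        }
        Stat s = profiler.get("loadWidget");
        System.out.printf("calls=%d avg=%.0fns min=%dns max=%dns%n",
                s.count(), s.averageNanos(), s.minNanos(), s.maxNanos());
    }
}
```

Even in this toy version, every timed call pays for two clock reads and a map update, which hints at why instrumenting real classes slows the application down.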
